Intro

Working in the software security training business, I regularly hear the phrase 'secure code training,' and I find it limiting and deeply flawed. It assumes that software security is about training engineers to 'write secure code.' That is part of what we need to improve, but it is a small piece of a much larger puzzle. Building software involves more than engineers writing code; they are one group working in one phase of the entire process.

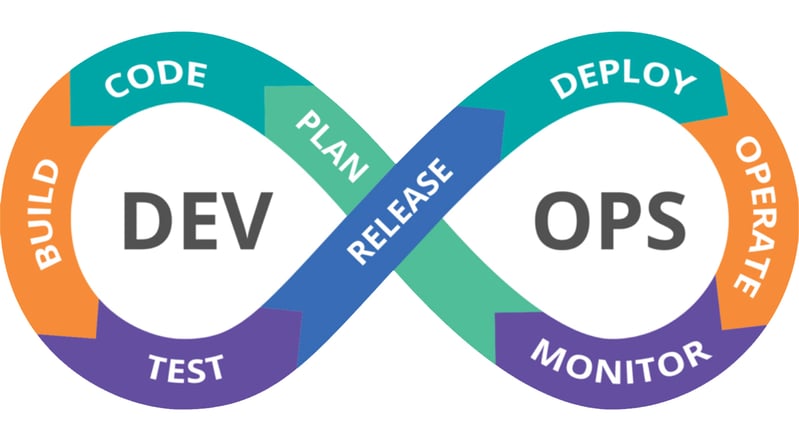

To make software more secure and truly reduce risk, a spectrum of people, processes, and technology must be addressed, no matter which development process is used. We must ensure that people in other roles, such as design, architecture, test, deployment, operations, and product management, also have the training they need to bring software security to their work. Vulnerabilities are not introduced only during coding. We need to address the processes that turn DevOps into SecDevOps, or that bring security to the more traditional approaches still used across many organizations. In addition, we need to make the training appropriate not just to role and process but also to the technologies involved, whether the languages and frameworks of the code or the infrastructure in which it runs (cloud, IoT…).

Finally, the training must be designed so that it is adopted and changes behavior. It must provide knowledge, but it must also include hands-on games and exercises that build the skills to apply that knowledge and provide the motivation to change behavior.

Beyond The Engineering Role

Many vulnerabilities are introduced into software by people other than engineers at other points in the software development lifecycle (SDLC). Designers must follow secure design practices such as threat modeling to ensure the application design includes the right security capabilities. Testers must test for security vulnerabilities as code moves to them. Deployment teams must ensure the infrastructure is securely configured and up to date on patches. It is not just the code writers who must follow secure practices. If they are the only ones trained, the application will contain avoidable security vulnerabilities and carry increased risk.

In the table below, I mapped each item in the OWASP Top 10 to the phases of the development lifecycle where that vulnerability should be considered and prevented. By extension, you can apply the mapping to roles. The OWASP Top 10 tends to be overemphasized, but it is a common reference, which makes it a useful way to illustrate the point. I am sure many experts could quibble with a few entries in the table. The idea is simply to illustrate that vulnerabilities must be addressed in all phases of the SDLC and across all roles in the software creation process.

In the first row, Broken Access Control, you can see that all phases and all roles must pay attention to this vulnerability. In the requirements phase, the roles and rights must be laid out properly for least privilege. In design, the enforcement mechanism must not have weaknesses that allow access control to be bypassed. It must then be coded properly, and QA must test for access control vulnerabilities. Deployment must make sure access control on the infrastructure is set properly. Finally, monitoring must watch for log entries that indicate data is being improperly accessed. As you can see, training only coders on this vulnerability can still leave you incredibly exposed.
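As a minimal sketch of how those phases connect, the Python below shows a deny-by-default permission check driven by a role map of the kind that might come out of requirements, plus an audit line that operations could monitor. The role names, permission strings, and the `is_allowed` and `audit_line` functions are hypothetical illustrations, not part of any specific framework.

```python
# Hypothetical role-to-permission map, as might be laid out during requirements.
# Each role gets only the permissions it needs (least privilege).
ROLE_PERMISSIONS = {
    "viewer": {"report:read"},
    "editor": {"report:read", "report:write"},
    "admin": {"report:read", "report:write", "user:manage"},
}


def is_allowed(role: str, permission: str) -> bool:
    """Deny by default: unknown roles and unlisted permissions are refused."""
    return permission in ROLE_PERMISSIONS.get(role, set())


def audit_line(user: str, role: str, permission: str) -> str:
    """Build a log entry that monitoring can scan for denied access attempts."""
    outcome = "ALLOW" if is_allowed(role, permission) else "DENY"
    return f"{outcome} user={user} role={role} permission={permission}"
```

A QA test could then assert that `is_allowed("viewer", "report:write")` is false and that an unknown role such as `"guest"` is refused, while operations could alert on repeated `DENY` entries for the same user.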

Beyond Coding Vulnerabilities

If you start looking for 'secure code training,' talk to organizations, and look at their demos, you will almost always see the same one or two examples: Cross-Site Scripting or SQL Injection. This is not a coincidence. These are two basic vulnerabilities that are easy to understand and generally easy to fix or prevent with a few specific coding techniques. They lend themselves to the 'secure code training' model. But, as the example above shows, when you look at a different vulnerability such as A1 Broken Access Control, you address only a small portion of the problem if you conduct secure code training alone.

In fact, three items on the 2021 OWASP Top 10 are not coding-related at all.

- A4: Insecure Design is new on the 2021 list. OWASP specifically talks about moving left and including activities such as threat modeling and reference architecture. The OWASP Top 10 site states, "An insecure design cannot be fixed by a perfect implementation as by definition, needed security controls were never created to defend against specific attacks."

- A5: Security Misconfiguration moved up from sixth on the previous list. How many data disclosures were caused by an open cloud storage bucket? One example was the recent case where Securitas exposed 3TB of airport employee records this way. No amount of secure code training would have prevented this. Per the Sonatype State of Cloud Security Report, "Misconfigurations represent the number one risk for every organization using the cloud."

- A6: Vulnerable and Outdated Components. I did include this for developers in the table to keep it expansive, because sometimes these are code components that developers are responsible for, as in the Log4j debacle. The fix could require a developer to update the component, or a patch applied by other means if the component runs as a separate module, as is often the case for Log4j. Per ZDNet, "Months on from a critical zero-day vulnerability disclosed in the widely-used Java logging library Apache Log4j, a significant number of applications and servers are still vulnerable to cyberattacks because security patches haven't been applied."
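To make the A6 item above concrete, here is a rough sketch of a component inventory check that flags anything below a required minimum version. The `find_outdated` function, the inventory format, and the minimum version used in the usage note are assumptions for illustration; real minimums should come from the vendor's current advisories, and real version strings are often more complex than plain dotted numbers.

```python
def parse_version(text: str) -> tuple[int, ...]:
    """Parse a plain dotted numeric version such as '2.14.1'.

    Assumption: no qualifiers like '-beta' or '-SNAPSHOT' are present.
    """
    return tuple(int(part) for part in text.split("."))


def find_outdated(installed: dict[str, str], minimum: dict[str, str]) -> list[str]:
    """Return a description of each installed component below its minimum version.

    Components with no entry in `minimum` are not checked.
    """
    outdated = []
    for name, version in sorted(installed.items()):
        required = minimum.get(name)
        if required is not None and parse_version(version) < parse_version(required):
            outdated.append(f"{name} {version} < {required}")
    return outdated
```

For example, with an inventory of `{"log4j-core": "2.14.1"}` and an illustrative minimum of `{"log4j-core": "2.17.1"}`, the function reports `log4j-core 2.14.1 < 2.17.1`. A check like this can run in deployment or operations, which is the point: the fix does not always pass through a developer.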

Beyond Vulnerability Checklist Thinking

The basic approach needs to change from teaching about vulnerabilities to teaching the full spectrum of creating secure software, with training dedicated to the specific needs of each role and its part in each phase. The table below offers another example. Reading across the rows, A1 and A7 stand out as having implications in every phase. Reading down the columns, testing stands out as having implications for every vulnerability. This makes sense, because testing is the only way to find vulnerabilities that may have been left behind, just as it is the way to find other types of bugs in the software. Like functional testing that goes beyond the code (performance, stability, usability, etc.), security testing involves looking for openings beyond simple coding vulnerabilities.

For example, it is essential to test deployed software and its infrastructure in place (or at least in staging for cloud environments) to find non-code vulnerabilities, like the aforementioned open-access data buckets. To achieve this, the people performing the tests must be trained to look for these vulnerabilities, which is another example of going beyond code. They must learn to think like a hacker and know the techniques a hacker might use to exploit whatever is available in the software, not just work through a checklist of specific vulnerabilities.
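One way a tester might look for the open-bucket problem is to inspect the access policy attached to a storage bucket. The sketch below assumes the policy is available as a JSON document in the AWS S3 bucket policy format (`Statement`, `Effect`, `Principal`, `Condition`). The `public_statements` function is a hypothetical helper, and the check is deliberately simplified: it ignores ACLs, account-level public access settings, and the meaning of any conditions.

```python
import json


def _is_wildcard(principal) -> bool:
    """True if the principal is '*' or contains '*' (for example {"AWS": "*"})."""
    if principal == "*":
        return True
    if isinstance(principal, dict):
        for value in principal.values():
            values = value if isinstance(value, list) else [value]
            if "*" in values:
                return True
    return False


def public_statements(policy_json: str) -> list[dict]:
    """Return Allow statements that grant access to everyone with no Condition."""
    policy = json.loads(policy_json)
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):  # a single statement may not be wrapped in a list
        statements = [statements]
    return [
        statement
        for statement in statements
        if statement.get("Effect") == "Allow"
        and _is_wildcard(statement.get("Principal"))
        and "Condition" not in statement
    ]
```

Any statement this returns deserves a closer look in staging or production, since it would let anonymous users perform the listed actions on the bucket.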

OWASP Top 10 2021 Mapped Against SDLC / Roles

| OWASP Item | Requirements | Design | Coding | Testing | Deployment | Operations |
| --- | --- | --- | --- | --- | --- | --- |
| A1 Broken Access Control | | | | | | |
| A2 Cryptographic Failures | | | | | | |
| A3 Injection | | | | | | |
| A4 Insecure Design | | | | | | |
| A5 Security Misconfiguration | | | | | | |
| A6 Vulnerable and Outdated Components | | | | | | |
| A7 Identification and Authentication Failures | | | | | | |
| A8 Software and Data Integrity Failures | | | | | | |
| A9 Security Logging and Monitoring Failures | | | | | | |
| A10 Server-Side Request Forgery | | | | | | |

Conclusion

As you can see from the table above, training only engineers on secure coding will miss many places in the software creation process where vulnerabilities can be introduced or addressed. Even a list as common as the OWASP Top 10 contains some vulnerabilities that are unrelated to coding. All vulnerabilities need to be addressed in phases of the SDLC beyond coding alone.

About Fred Pinkett, Senior Director Product Management

Fred Pinkett is the Senior Director of Product Management for Security Innovation. Prior to this role, he was at Absorb, Security Innovation's learning management system partner. In his second stint with the company, he is the first product manager for Security Innovation's computer-based training. Fred has deep experience in security and cloud storage, including time at RSA, Nasuni, Core Security, and several other startups. He holds an MBA from Boston College and a BS in Computer Science from MIT. Working at both Security Innovation and Absorb, Fred clearly can't stay away from the intersection between application security and learning. Connect with him on LinkedIn.